You’ve been handed a product request that sounds simple enough: add property search, home values, or listing pages to a Next.js app. Then you open a few provider sites and the actual work starts. Some APIs focus on public records. Some expose listing feeds through MLS partners. Some are valuation-first. Others are built around a standard like RESO rather than a single dataset. Then there are usage restrictions, display rules, authentication quirks, stale data problems, and frontend performance issues that don’t show up in sales copy.

That’s where most real estate api guides fall short. They stop at “here are some providers” and leave out the parts that decide whether your app ships cleanly or turns into a maintenance problem. In practice, choosing the API is only the first decision. The harder ones come next: how you model inconsistent fields, where you cache volatile data, when you render on the server, and how you avoid getting blocked by rate limits or licensing terms.

For a React and Next.js team, those choices matter early. A property details page has different needs than an internal analytics dashboard. A consumer-facing listing search has different legal constraints than a private investor tool. If you mix those up, you end up rebuilding your data layer halfway through the project.

Introduction

Teams often start in the same place. Product wants listings, values, or market context in the app. Engineering wants a clean API with predictable JSON. Legal wants to know what can be displayed. Nobody wants to discover late in the sprint that the chosen provider doesn’t support the needed geography, field set, or usage model.

A real estate api isn’t one thing. It’s a category that spans listing data, public records, ownership history, deeds, mortgage information, valuations, neighborhood intelligence, and standardized MLS transport layers. If you treat all of those as interchangeable, you’ll either overpay for data you won’t use or build against an interface that doesn’t match the product.

The practical way to approach this is to think in layers:

- Data layer: What data do you need?

- Provider layer: Which vendor or standard supplies that data reliably?

- Application layer: How will Next.js fetch, cache, render, and revalidate it?

- Compliance layer: What are you allowed to store, display, and refresh?

Practical rule: Pick the API after you define the screen, not before. “Need property data” is too vague. “Need SSR property detail pages with valuation history and neighborhood context” is specific enough to guide a good choice.

The rest of the build gets easier when you’re disciplined here. Schema design improves. Caching gets simpler. Frontend components stop depending on giant raw payloads. And you avoid the expensive mistake of discovering that your app needs MLS-grade freshness but you only integrated public-record data.

Understanding Real Estate API Data Types

A search page, an investor dashboard, and a home value widget can all use a real estate api, but they do not need the same data. That decision affects everything downstream. Your TypeScript models, cache keys, page rendering strategy, and legal review all change based on the data category you choose first.

Listings and active inventory

Listing data supports the classic consumer experience. Search results, map pins, photo galleries, open house details, price changes, and agent remarks all live here. If the product needs users to browse homes that are currently for sale or rent, listings are usually the primary feed.

The catch is freshness and usage rights.

Active inventory changes often, and the display rules are stricter than many first-time integrations expect. Providers may limit how long you can cache records, what attribution must appear, whether sold data can be mixed into active results, and which fields can be shown publicly. For a Next.js app, that usually means short revalidation windows, clear separation between public listing fields and internal-only metadata, and an early compliance review before UI work gets too far.

Public records and ownership history

Public-record data is better suited to research, enrichment, and internal workflows. It usually includes assessor data, parcel facts, deed transfers, mortgage history, tax assessments, foreclosure indicators, and owner information.

That data fits products such as:

- Investor tools: owner lookup, off-market prospecting, distressed-property research

- Back-office systems: CRM enrichment, lead scoring, acquisition pipelines

- Analytics apps: historical ownership and financing patterns

From an engineering standpoint, public records are often easier to cache than active listings because they do not change minute to minute. The trade-off is accuracy for current market activity. A parcel record can be excellent for identifying a property and its history, but weak for answering whether it is actively listed right now.

Valuations and historical records

Valuation data supports estimate-driven features. Home value pages, rent estimate widgets, portfolio summaries, and trend charts all depend on this category. Historical records matter just as much as the current estimate because users usually want context. A single number without prior sale dates, value movement, or listing history is hard to trust.

This data also needs careful product framing. Automated valuations are useful for ranking, comparison, and lightweight consumer insight. They are a poor replacement for an appraisal or broker opinion, and your UI should say that clearly. In code, treat valuation fields as a separate domain model instead of stuffing them into a generic property object. That keeps components honest about what is factual, what is estimated, and what may need a last-updated label.

Neighborhood and location context

Neighborhood data answers questions that property records cannot. Commute patterns, school context, flood or fire risk, local price trends, nearby amenities, and market conditions often drive user decisions more than square footage does.

This category is also where payloads get noisy fast. Providers package location context in very different ways. One API may return a clean neighborhood summary. Another may give you dozens of nested metrics tied to census geography, school zones, and risk models. Normalize that data before it reaches React components or your frontend ends up coupled to one vendor’s response shape.

A practical rule helps here. Model neighborhood data as optional, additive context. Do not make the property detail page depend on it to render. That gives you room to defer secondary fetches, cache expensive responses longer, and degrade gracefully when a provider has gaps in certain ZIP codes.

A Developer's Comparison of Major Real Estate APIs

You start with a simple goal. Show a property page in a Next.js app. Two weeks later, the hard part is no longer rendering cards or wiring search params. It is choosing a data source that will not force a rewrite once product asks for listing freshness, school context, saved searches, or agent-facing workflows.

Provider choice affects your schema, cache strategy, error handling, and compliance work. Compare APIs by the work they create in your stack. The right question is not which brand looks strongest. The right question is which provider fits the product you are shipping in React and Next.js.

Quick comparison table

| Provider | Primary Data Focus | Coverage | Pricing Model | Best For |

|---|---|---|---|---|

| ATTOM | Public records, ownership, deeds, tax, mortgages, hazards | Nationwide U.S. coverage | Enterprise-oriented | Data-heavy PropTech products |

| Zillow API and Bridge | Valuations, historical records, listings-related datasets | Broad U.S. property coverage | Free and paid tiers, enterprise access for some use cases | Consumer-style property lookup and valuation features |

| RentCast | AVMs, active listings, rent and price trends | Nationwide U.S. property coverage | API subscription style | MVPs needing straightforward property and rental data |

| RealEstateAPI.com | Developer-focused property data | Broad U.S. coverage | Commercial subscription | Custom internal tools and bulk workflows |

| RESO-based MLS providers | Standardized MLS transport | Depends on MLS/provider relationships | Provider-specific | MLS-integrated listing apps |

ATTOM for property intelligence and enrichment

ATTOM fits products that need public-record depth more than a polished consumer experience. It is a strong option for ownership history, deed data, tax details, hazard signals, and other enrichment fields that help analysts, underwriters, and internal operations teams.

The trade-off shows up quickly in implementation. Response shapes can be large, nested, and uneven across endpoints. In a Next.js app, that usually means creating a server-side adapter before anything touches your components. Without that layer, your frontend starts depending on vendor field names and your UI becomes expensive to change.

I usually avoid ATTOM for a first MVP unless the product wins on data breadth.

Zillow for valuation-first product experiences

Zillow is a better fit when the product needs familiar valuation-oriented pages and user-friendly property context. The appeal is not only the dataset. It is the mental model users already understand. Estimated value, price history, and home facts are easier to present in a consumer app when the source aligns with those expectations.

The trade-off is access and product fit. Zillow-related data paths can be more constrained than public-record providers, and they do not replace MLS access for every listing workflow. If your roadmap includes brokerage features, syndication rules, or strict listing freshness requirements, verify those constraints before you commit your data model.

RentCast for a faster first integration

RentCast is often easier for a small team to ship with. The payloads are generally easier to work with, the product focus is clear, and it maps well to rental apps, valuation tools, and market trend features.

That simplicity matters. A junior developer can usually stand up a server-side proxy, normalize a few core fields, and get usable UI output without building a giant translation layer first. You still need to test edge cases like partial addresses, sparse history, and inconsistent media availability, but the integration burden is usually lower than enterprise-oriented providers.

RealEstateAPI.com for internal tools and custom workflows

RealEstateAPI.com tends to make sense when the product is less about a consumer portal and more about workflow automation. Lead pipelines, prospecting tools, acquisition screens, skip-trace style research, and bulk record processing are common examples.

This category works well when your backend owns the product logic. You fetch property data, map it into your own entities, and drive dashboards or internal actions from there. If that is your direction, set up server-only credentials from day one and keep every upstream call behind API routes or route handlers. A quick guide to structuring Next.js environment variables for server-side API integrations helps avoid accidental key exposure.

RESO-based MLS providers for listing-driven apps

If your app depends on MLS listings, RESO support matters more than the marketing copy on the provider site. Good RESO alignment reduces custom mapping work, makes feed changes easier to manage, and lowers the chance that one MLS-specific quirk spreads through your component tree.

This is the part many comparison posts skip. MLS data is not just another JSON source. It comes with usage rules, display requirements, and field-level constraints that shape your architecture. Choose this path when current listings are central to the product, not as a shortcut for general property data.

A practical rule helps here. Pick the provider that minimizes custom transformation, gives you a stable legal path for your use case, and matches the UI you plan to ship in the next three months, not the hypothetical platform you may build next year.

Handling API Authentication and Rate Limits

A real estate API integration usually looks fine in local development right up until the first demo account starts hitting quota or someone accidentally exposes a key in the browser bundle. Authentication and rate limits shape the architecture early. If you treat them as cleanup work, you usually end up rewriting your data layer after launch.

API keys and OAuth

Most providers use either static API keys or OAuth 2.0 tokens. The integration choice matters because it changes where state lives.

API key setups are straightforward. Keep the key on the server, call the provider from a Route Handler, Server Action, or backend worker, and never let raw credentials reach client code. If you need a reference for getting that setup right across local, preview, and production environments, this guide to structuring Next.js environment variables for server-side integrations is useful.

Basic server-side fetch pattern:

export async function GET(req: Request) {

const url = new URL(req.url)

const address = url.searchParams.get('address')

const res = await fetch(`https://api.example.com/properties?address=${encodeURIComponent(address ?? '')}`, {

headers: {

'x-api-key': process.env.REAL_ESTATE_API_KEY ?? '',

'accept': 'application/json',

},

cache: 'no-store',

})

if (!res.ok) {

return Response.json({ error: 'Upstream request failed' }, { status: res.status })

}

const data = await res.json()

return Response.json(data)

}

OAuth adds operational overhead. Access tokens expire, refresh tokens can fail, and some providers scope tokens differently between sandbox and production. Put token fetch and refresh logic in one server-side module, cache the active token for its valid lifetime, and make every upstream caller use that shared path. That avoids the common bug where three concurrent requests all try to refresh at once and one of them wins by accident.

Rate limiting and 429 handling

Real estate APIs vary a lot here. Some providers publish hard per-minute or per-hour caps. Others just start returning 429 Too Many Requests once your app gets noisy. Build for the second case even if the docs look generous.

A retry helper with backoff is the minimum:

async function fetchWithBackoff(url: string, init?: RequestInit, retries = 3) {

let attempt = 0

while (attempt <= retries) {

const res = await fetch(url, init)

if (res.status !== 429) return res

const delay = Math.min(1000 * (attempt + 1), 5000)

await new Promise((resolve) => setTimeout(resolve, delay))

attempt++

}

throw new Error('Rate limit exceeded after retries')

}

That helper is fine for a first pass, but production apps usually need two more protections. First, respect the provider's Retry-After header if it exists. Second, add jitter so many identical retries do not stampede the API at the same moment.

The practical rules are simple:

- Centralize outbound calls: one server path makes quotas easier to observe and enforce.

- Collapse duplicate requests: if five users request the same property details, your server should reuse one upstream response when possible.

- Set per-route budgets: search autocomplete, listing pages, and back-office imports should not share the same request allowance.

- Queue bulk work: enrichment jobs and sync tasks should run outside the request-response path.

- Log provider failures separately from app errors: a spike in

429or401responses usually points to quota or credential issues, not a React bug.

One more trade-off matters. Aggressive retries can improve success rates for interactive requests, but they also increase total upstream volume. For user-facing pages, keep retry counts low and fail fast with a cached or partial response if you have one. For background imports, slower retries are usually the better choice.

Integrating Real Estate Data in a Next.js App

The cleanest integration pattern is usually this: browser talks to your Next.js app, your Next.js app talks to the property data provider. That gives you one place to enforce auth, trim payloads, normalize fields, cache responses, and handle provider outages.

Build a thin server-side adapter

Don’t expose raw provider responses directly to React components. Instead, create an internal endpoint that maps external fields into your app’s model.

Example Route Handler:

import { NextResponse } from 'next/server'

export async function GET(req: Request) {

const { searchParams } = new URL(req.url)

const query = searchParams.get('query') ?? ''

const upstream = await fetch(`https://api.example.com/search?query=${encodeURIComponent(query)}`, {

headers: {

'x-api-key': process.env.REAL_ESTATE_API_KEY ?? '',

},

next: { revalidate: 300 },

})

if (!upstream.ok) {

return NextResponse.json({ error: 'Search failed' }, { status: upstream.status })

}

const raw = await upstream.json()

const results = (raw.results ?? []).map((item: any) => ({

id: item.id,

address: item.address?.full ?? '',

city: item.address?.city ?? '',

state: item.address?.state ?? '',

postalCode: item.address?.zip ?? '',

price: item.listPrice ?? null,

bedrooms: item.beds ?? null,

bathrooms: item.baths ?? null,

photo: item.primaryPhoto ?? null,

status: item.status ?? 'unknown',

}))

return NextResponse.json({ results })

}

That adapter layer is boring work, but it’s where maintainable integrations are won.

Use SWR for interactive search UIs

For search boxes, map filters, and dashboards, client-side fetching with SWR keeps the UI responsive without making you hand-roll cache and revalidation logic. If you want a broader React pattern refresher, this guide on consuming REST APIs in React covers the core flow well.

'use client'

import useSWR from 'swr'

import { useState } from 'react'

const fetcher = (url: string) => fetch(url).then((res) => res.json())

export function PropertySearch() {

const [query, setQuery] = useState('')

const { data, isLoading, error } = useSWR(

query ? `/api/properties/search?query=${encodeURIComponent(query)}` : null,

fetcher

)

return (

<div>

<input

value={query}

onChange={(e) => setQuery(e.target.value)}

placeholder="Search address, city, or ZIP"

/>

{isLoading && <p>Loading results...</p>}

{error && <p>Could not load results.</p>}

<ul>

{data?.results?.map((property: any) => (

<li key={property.id}>

<strong>{property.address}</strong>

<div>{property.city}, {property.state} {property.postalCode}</div>

<div>{property.price ?? 'Price unavailable'}</div>

</li>

))}

</ul>

</div>

)

}

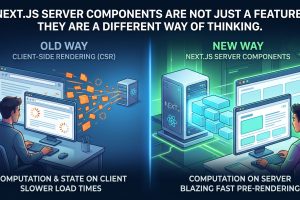

Use server rendering for public property pages

Property detail pages usually benefit from server rendering because they’re shared, indexed, and loaded directly. You want stable HTML on first load and a controlled fetch path.

export default async function PropertyPage({ params }: { params: { id: string } }) {

const res = await fetch(`${process.env.APP_URL}/api/properties/${params.id}`, {

cache: 'no-store',

})

if (!res.ok) {

throw new Error('Failed to load property')

}

const property = await res.json()

return (

<main>

<h1>{property.address}</h1>

<p>{property.city}, {property.state} {property.postalCode}</p>

<p>{property.price ?? 'Contact for price'}</p>

</main>

)

}

A useful demo can help before you start wiring the full flow:

What works and what doesn’t

What works in production:

- Internal API routes: one contract for your frontend

- Response shaping: send only fields the component needs

- Clear loading and error states: provider hiccups are normal

- Split rendering strategy: SSR for public pages, CSR for highly interactive filters

What usually goes wrong:

- Passing raw provider payloads into components

- Fetching directly from client components with secret-bearing headers

- Building pages around provider field names instead of app field names

- Ignoring empty-field scenarios, which are common in property data

Modeling API Data with TypeScript for React Components

Real estate responses get messy fast. You’ll see nested addresses, optional valuation objects, arrays of historical records, status codes that vary by source, and field names that look similar but mean different things. If you use any, that confusion spreads through the whole app.

Start with your UI model, not the upstream payload

This is the key habit. Don’t mirror the provider response one-to-one unless you’re building an SDK. Define the types your React components need, then map into those types at the boundary.

If you want a broader foundation for strong typing in component-driven apps, this walkthrough on TypeScript with React is a useful companion.

export interface Address {

line1: string

city: string

state: string

postalCode: string

}

export interface PropertySummary {

id: string

address: Address

price: number | null

bedrooms: number | null

bathrooms: number | null

status: string

primaryPhotoUrl: string | null

}

export interface PropertyDetail extends PropertySummary {

description?: string

squareFeet?: number | null

yearBuilt?: number | null

valuation?: {

estimate: number | null

lastUpdated?: string

}

}

Map raw data explicitly

The transformation function is where you absorb provider weirdness:

export function toPropertySummary(raw: any): PropertySummary {

return {

id: String(raw.id ?? ''),

address: {

line1: raw.address?.full ?? raw.address?.line1 ?? '',

city: raw.address?.city ?? '',

state: raw.address?.state ?? '',

postalCode: raw.address?.zip ?? '',

},

price: raw.listPrice ?? null,

bedrooms: raw.beds ?? null,

bathrooms: raw.baths ?? null,

status: raw.status ?? 'unknown',

primaryPhotoUrl: raw.primaryPhoto ?? null,

}

}

That small layer gives you autocompletion, cleaner prop contracts, and far fewer “cannot read property of undefined” errors.

Typed adapters are more valuable than typed components. If your data boundary is sloppy, the rest of the app inherits the problem.

Use typed props for presentation components

type PropertyCardProps = {

property: PropertySummary

}

export function PropertyCard({ property }: PropertyCardProps) {

return (

<article>

<h2>{property.address.line1}</h2>

<p>{property.address.city}, {property.address.state} {property.address.postalCode}</p>

<p>{property.price ?? 'Price unavailable'}</p>

</article>

)

}

A good rule for junior developers is simple: if a component only needs six fields, its prop type shouldn’t accept a giant provider response with fifty nested keys.

Advanced Caching for High-Performance Real Estate Apps

You ship a property search page, traffic picks up, and the app starts burning through API quota on repeated searches for the same ZIP code, price range, and beds filter. At the same time, a listing detail page shows yesterday’s price because the cache is too aggressive. Real estate caching fails in one of two ways. It either misses too often and gets expensive, or it hits too long and shows stale data.

A good setup treats listing data by how it changes, not by which component renders it. Price, status, and open house timing need short lifetimes. Building facts, school names, and tax history can usually sit longer. Search result pages sit in the middle because they are expensive to generate and heavily repeated, but they still need regular refreshes.

Use multiple caching layers

For a Next.js app, I usually separate caching into three jobs:

- Client cache: SWR or React Query for deduping requests in the browser and making back-and-forth navigation feel fast

- Server cache: route-level caching or Redis for shared upstream responses

- Page cache: ISR or revalidation for public pages that get repeat traffic

Each layer solves a different problem. Client caching improves UX inside a session. Server caching cuts duplicate upstream calls across users. Page caching lowers render cost for pages that many visitors request in roughly the same shape.

Match cache policy to data volatility

Do not give every response the same TTL. That is the fastest way to get both stale pages and unnecessary API spend.

A practical default looks like this:

| Data type | Good default |

|---|---|

| Property price and listing status | Short server cache. Trigger refresh from webhooks or internal change events if available |

| Public records and structural details | Longer cache window |

| Search results | Cache by normalized query and filter set |

| Public property pages | ISR or on-demand revalidation |

If your provider supports webhooks, use them for the fields that drive user trust. Price changes and status transitions matter more than cached lot size. If webhooks are not available, shorten TTLs only for the slices that change often. Avoid invalidating the whole property object when one field changes if your cache design lets you split it.

Use ISR where pages are read far more than they change

Listing detail pages and neighborhood pages are good ISR candidates when public traffic is high and edits are relatively infrequent from the app’s point of view. That keeps TTFB predictable without forcing a live upstream fetch on every request.

Basic pattern:

export const revalidate = 600

That works for many detail pages, but it is only a starting point. If your app displays fields that change often, pair ISR with on-demand invalidation so a listing update can refresh the page immediately instead of waiting for the next interval.

Use Redis when many users request the same data

Redis helps when expensive upstream requests repeat across sessions. Good candidates include:

- property detail payloads

- normalized search results for common filter sets

- neighborhood summaries

- valuation snapshots for internal tools

Normalize the cache key before writing it. Query strings with the same meaning often arrive in a different order, and that creates accidental cache misses. Sort filter params, remove defaults, and convert arrays into a stable representation before generating the key.

The cheapest API request is the one your app never makes. The second cheapest is the one your server makes once.

Reduce payload size before caching

Smaller payloads are easier to cache, cheaper to transfer, and simpler to invalidate. If a RESO-compatible endpoint supports field selection, request only the fields the current view needs. A search card does not need ownership history. A detail page usually does not need every image size variant or every agent metadata field.

I would also avoid caching raw provider responses unless you have a specific operational reason. Raw payload caches look flexible early on, then turn into a maintenance problem. They consume more storage, make invalidation harder, and let provider-specific field names leak deeper into your app than they should.

A better pattern is to cache normalized objects at the boundary where you already map provider data into your own types. That keeps the frontend stable even if you switch vendors, add a second provider, or get a schema change in production.

Understanding MLS, IDX, and RESO API Compliance

A lot of first real estate API projects break here. The data loads, the search page works, and then QA or legal finds missing attribution, stale listings, or fields your agreement does not allow you to show. By that point, the fix is no longer a small UI patch. It reaches into your fetch layer, your cache policy, and your component props.

What MLS and IDX mean in practice

An MLS is usually the upstream system behind active listing data in the U.S. IDX is the rule set that governs how approved participants can display part of that data on public websites. For a Next.js app, that affects more than the API contract. It affects page design, rendering rules, and what data can safely reach the browser.

That usually means four implementation constraints show up early:

- Attribution requirements: office, broker, or MLS credits must appear in specific views

- Display limits: some fields can be stored for search or internal logic but not exposed publicly

- Refresh obligations: listing status and price changes often need a defined update cadence

- User access differences: public visitors, signed-in users, and internal staff may not be allowed to see the same data

If you skip those constraints in your first schema draft, you often end up refactoring later. A flat Property type with every upstream field exposed everywhere is convenient at the start and expensive to clean up.

Why RESO matters technically

RESO Web API gave developers a cleaner path than older MLS integrations. The RESO Web API is a RESTful protocol built on OData, mandated by the National Association of REALTORS® for REALTOR®-owned MLS organizations, with full implementation required by June 30, 2016, according to NAR’s RESO policy documentation.

The practical benefit is standardization. Shared field definitions and query patterns reduce one-off adapter code, especially if your app may touch multiple MLS sources over time. You still need a provider-specific mapping layer, but you are less likely to spend days reconciling basic concepts like list price, status, or bedroom counts across feeds.

For a React and Next.js stack, OData-style filtering and field selection also change how you design data fetching. You can request narrower payloads for cards, broader payloads for detail pages, and keep your server components or route handlers focused on view-specific data needs.

What compliance changes in your codebase

Compliance belongs in the architecture, not in a launch checklist.

A production app usually needs explicit handling for displayable versus non-displayable fields, auditability around refresh timing, and a clear boundary between server-only data and browser-safe props. In practice, that often means adding metadata to your internal model or mapper layer so components cannot accidentally render restricted fields.

I usually treat this as a policy problem expressed in code. A simple example is keeping private, contractual, or operational fields out of the UI model entirely:

- ingest upstream listing data on the server

- map it into an internal normalized record

- derive a public display model with only approved fields

- render React components from that display model, not from the raw provider response

That extra layer feels slower on day one. It saves time the first time an MLS rule changes or a second provider enters the project.

RETS versus RESO

If you run into RETS, assume higher integration cost until proven otherwise. Legacy RETS feeds often come with older sync patterns, more custom parsing, and more operational edge cases around ingest and normalization. Teams still support it because the business has to, not because it is pleasant to build against.

RESO is usually the better fit for a modern Next.js application. It works better with typed API clients, selective queries, and a cleaner server-side integration model.

Compliance is part of the application architecture. If the product depends on MLS-backed data, legal rules and UI rules are part of the same implementation.

Decision Framework for Selecting Your API

There isn’t one best real estate api. There’s a best fit for the product you’re shipping right now. If you force an enterprise-grade dataset into a small MVP, you slow delivery. If you build a public listings portal on a lightweight public-record API, you’ll hit product gaps immediately.

Use these questions to narrow the choice.

Start with the user-facing feature

Ask what the user is doing on the page:

- Searching active homes? You likely need MLS-connected listing data.

- Checking estimated value? A valuation-focused provider may be enough.

- Researching owners, deeds, tax history, or off-market properties? Public-record depth matters more.

- Building internal dashboards? Display restrictions may be less important than breadth and normalization.

Then evaluate implementation cost

Some APIs look affordable until you count engineering time. Ask:

- How clean is the response shape?

- Will the team need a heavy adapter layer?

- Can you cache safely?

- Does the provider support the geography and fields your UI requires?

- Is the legal path clear for your app model?

A provider with slightly higher direct cost can still be the cheaper choice if integration and maintenance are cleaner.

A practical shortlist approach

For a junior team shipping fast:

- Pick one primary use case.

- Choose the provider whose strength matches that use case.

- Define your internal

Propertymodel before building pages. - Build one search route and one detail route.

- Test compliance and caching before expanding features.

If the product needs both listings and rich historical records, assume you may end up with more than one upstream source. That’s normal. The important part is to hide that complexity behind your own API boundary.

Frequently Asked Questions About Real Estate APIs

Can you access MLS data without being a licensed agent?

Sometimes, but usually through an approved relationship, vendor, brokerage, or MLS-compliant platform rather than as a completely independent public scrape or generic feed purchase. The exact path depends on the MLS and your business model. Treat this as a partnership and compliance question, not just a technical one.

Should you use RESO or RETS for a new project?

Use RESO if you have a choice. RETS is legacy infrastructure. New development effort is better spent on a standards-based path that works with modern HTTP tooling and cleaner field interoperability.

How do you normalize multiple providers into one app?

Create an internal domain model and write provider-specific mappers into that model. Don’t let React components know whether a field came from ATTOM, Zillow, a RESO feed, or something else. The component should receive a stable prop contract.

Should the frontend ever call the provider directly?

Usually no. Put your Next.js server between the browser and the provider. That gives you secure credential handling, response shaping, retries, logging, and caching.

What’s the biggest beginner mistake?

Building UI directly on top of upstream payloads. It feels faster at first, then breaks when fields are optional, renamed, or inconsistent across endpoints.

When should you add Redis?

Add it when repeated server-side requests for the same resource start driving cost or latency. Don’t add it because it sounds enterprise. Add it because your request patterns justify it.

If you’re building with React and Next.js and want more practical integration guides like this, Next.js & React.js Revolution is worth bookmarking. It’s a strong resource for developers who want clear tutorials, production-minded patterns, and the kind of frontend and full-stack guidance that helps teams ship instead of just prototype.

Add Comment